
March 2019

AutomatedBuildings.com


The Software

The most important part of an Edge Controller is the software. It’s far more important to have high-quality, reliable, maintainable, and extensible software than anything else. Given a trade-off between the two, great software on merely decent hardware is the better choice.

Heterogeneous System Architecture SoCs are moving forward at a rapid pace. Consider the recent announcement by silicon giant ST, which is now jumping into the apps processor market with the STM32MP1. This new hybrid Cortex-A7 and M4F chip combines the relatively high-throughput multitasking capability of its dual A7 cores with the more deterministic 3-stage pipeline of an M4 microcontroller core for performing critical real-time tasks. This device is very similar to NXP’s i.MX7D, which made its debut a year or two ago.

A decent edge controller requires only a minimal processor with hardware support for multithreading/multitasking and memory virtualization. As long as we have controller hardware based on such a device, we have the platform to build a great system. Such a controller can possibly serve for decades without needing replacement. As mentioned previously, edge controllers use full operating systems. This gives our building automation applications space to breathe, as well as support from underlying software libraries to simplify and accelerate new development.

All of the new commercial controllers have operating systems of some kind. Some commonly encountered ones are Linux, Android, QNX, or even Windows. In many cases, these OSes are special compact embedded versions of their desktop counterparts. Others, such as those based on the Linux kernel, consist of the exact same code found on a full-blown PC. That is to say, there is no such thing as Embedded Linux, only Linux on an embedded device. This particular feature of Linux offers great advantages when it comes to application development. The software can be developed conveniently on a desktop PC running a Linux distribution such as Ubuntu. An x86 machine running Linux can easily build software for an embedded ARM target device using cross-compilation. For example, the native Unix code supplied with Sandstar and/or Sedona can be built and run on a Linux desktop, cross-compiled and run on the embedded target, or natively built on the target itself! This is because the native C/C++ code in these frameworks uses the same standard libraries and system calls on every one of these platforms.

There are really only two major differences between an embedded operating system and a desktop system:

1) Embedded operating systems are usually headless. This means that they do not have support for a screen, keyboard, or mouse. Because of this, they don’t need a desktop environment either, which saves a great deal of space. If you want one, however, there are lightweight desktops such as LXQt.

2) Embedded operating systems have the necessary driver framework to interact with external sensors and I/O in real time. Just think of it as a PC with relays, AOs, and AIs. Here is a fantastic write-up explaining how a user program would interact with I/O.

For the Edge Controller to be a successful replacement for legacy devices, we must avoid the pitfalls of using full operating systems. Products based on apps processors are significantly more complex than a microcontroller, which runs a single thread and executes code in place. One of the major issues with apps-processor-based devices is boot time. With microcontrollers, even those using a lightweight RTOS, the main program starts running almost immediately when power is applied or restored. Contrast this with some production edge controllers, which can take several minutes to start executing DDC code. This situation is completely unacceptable for a field device performing important tasks such as running a central plant.

A lot of the delay in DDC start time in these devices has to do with the control programs running within a virtualized environment such as Java. Another problem with virtual environments is that the DDC applications lose the possibility of running in real time. Remember that “runs fast” is not the same as “real-time.” Some manufacturers have recently begun to address this issue by moving the time-critical portions of their control engines out of Java. Virtualization cannot be completely blamed, however. The operating system itself needs a bit of time to initialize and start running. A lot of time can be saved by not loading software that is not needed. Linux builds can be stripped down so they will boot to console very quickly. Take a look at this Linux build which boots in only one second.
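The difference between “runs fast” and “real-time” can be made concrete with a small measurement. The sketch below is only an illustration, not code from any controller: it runs a fixed-period loop and records how late each cycle wakes up. The function names, the 10 ms period, and the cycle count are all assumptions chosen for the example. A system can show a tiny average lateness and still have an occasional large worst case, and it is the worst case that matters for real-time control.

```python
import time


def measure_lateness(period_s=0.01, cycles=50):
    """Run a fixed-period loop; return how late each cycle woke up, in seconds."""
    lateness = []
    next_deadline = time.monotonic() + period_s
    for _ in range(cycles):
        # Sleep until the next deadline; a general-purpose OS may wake us late.
        delay = next_deadline - time.monotonic()
        if delay > 0:
            time.sleep(delay)
        lateness.append(max(0.0, time.monotonic() - next_deadline))
        next_deadline += period_s
    return lateness


def summarize(lateness):
    """Mean lateness reflects 'fast'; worst-case lateness reflects determinism."""
    return {
        "mean_s": sum(lateness) / len(lateness),
        "worst_s": max(lateness),
    }


if __name__ == "__main__":
    stats = summarize(measure_lateness())
    print(f"mean lateness:  {stats['mean_s'] * 1e6:.0f} us")
    print(f"worst lateness: {stats['worst_s'] * 1e6:.0f} us")
```

Running this on a loaded desktop and on an idle one usually shows the mean barely moving while the worst case varies widely, which is the behavior a real-time design has to bound.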

The device must incorporate hardware security at boot time and maintain a chain of trust spanning into user space. This usually requires the very first code that runs to be digitally signed and verified by the processor. Now that our applications have more space and resources to work with, so does malware. Apps and services will need to communicate with remote stations using secure and open networking protocols, most of which are IP-based, such as HTTPS. They should also be able to connect directly to a building management database server located on site, in the fog, or in the cloud without the need for specialized intermediary network routers or gateways.
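The chain-of-trust idea can be sketched in a few lines. This is a deliberately simplified illustration and not how any particular processor implements secure boot: real devices verify public-key signatures starting from boot ROM, whereas this sketch only chains SHA-256 digests. The stage names, the `verify_chain` function, and the idea of passing the trusted root digest as an argument (standing in for a value fused into the processor) are all assumptions made for the example.

```python
import hashlib
import hmac


def sha256_hex(blob: bytes) -> str:
    """Return the SHA-256 digest of a boot image as a hex string."""
    return hashlib.sha256(blob).hexdigest()


def verify_chain(stages, root_digest):
    """Check each stage against the digest vouched for by the previous stage.

    stages: list of (name, image_bytes, digest_of_next_stage_or_None).
    root_digest: trusted digest of the first stage (hypothetical stand-in
    for a key or hash held in processor hardware).
    Returns (True, None) on success or (False, name_of_failing_stage).
    """
    expected = root_digest
    for name, image, next_digest in stages:
        # A stage with no trusted digest, or a mismatching one, breaks the chain.
        if expected is None or not hmac.compare_digest(sha256_hex(image), expected):
            return False, name
        expected = next_digest
    return True, None


if __name__ == "__main__":
    bootloader, kernel, app = b"bootloader", b"linux-kernel", b"ddc-application"
    stages = [
        ("bootloader", bootloader, sha256_hex(kernel)),
        ("kernel", kernel, sha256_hex(app)),
        ("application", app, None),
    ]
    print(verify_chain(stages, sha256_hex(bootloader)))
```

The point of the structure is that trust is never assumed: each stage is only run after the stage before it has vouched for it, all the way up into user space.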

As long as all these potential pitfalls are properly addressed and avoided, the advantages of an OS-equipped edge controller outweigh its drawbacks. Devices with operating systems are easier to maintain than ones with only firmware. One ongoing problem that the edge controller can eliminate is hardware obsolescence due to unsupported firmware. Often the controls are the first thing replaced in a new building due to the original vendor’s inability to support them. This shouldn’t be the case with controllers that are only a few years old. We should be able to maintain these devices for decades if possible. The underlying system can be updated and maintained by a qualified system administrator without significantly affecting the functionality of installed applications and services running on top.

The question of who should maintain the underlying OS is still up in the air. In one model, the device manufacturer or a third party maintains the operating system. This was the proposition for the now-defunct Android Things platform. Google was to provide Android support with system updates for a set period of time and would push these updates over the air to devices installed in the field. Possibly a more viable solution would be for owners to maintain their own systems. The facility’s OT administrators would be responsible for maintaining and updating their own platforms. This would not require so much specialized HVAC and lighting system knowledge, as these specialists would only be applying changes to the underlying operating system. They would easily be able to accomplish this, as it is an environment they are familiar with. There are many community-supported Linux distros out there that would be well suited for such use, such as Debian.

Once we have a platform with a general-purpose operating system rather than proprietary firmware images, we are free to install and uninstall user-space building automation software as needed and can continue using the device for many years. An owner would only have to replace and update the software, rather than completely remove and replace the entire controller. Installed applications can be paid or free, such as Sandstar.

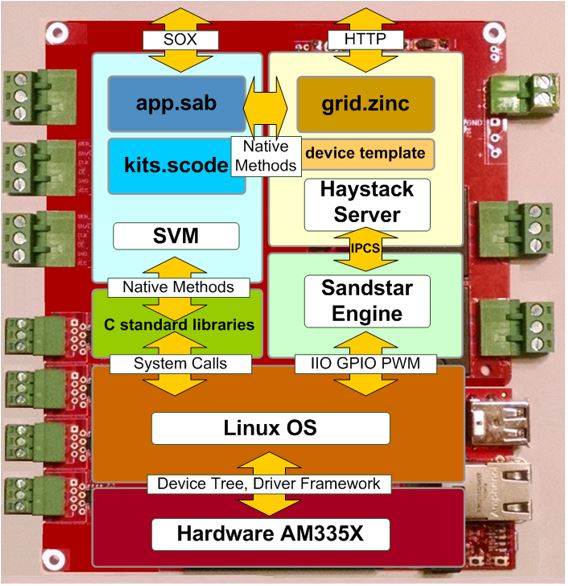

Project Sandstar provides a good example of what a complete edge controller system would look like. At the foundation, we have a hardware board based on the AM335x processor. The entire hardware support for booting the Linux kernel on this particular device has been available as open source for about ten years now. The demo available in the Sandstar repository uses Debian as an operating system. However, this demo will run on any ARMv7-A target running any Linux distro without having to be recompiled.

At the very lowest level, we have the Sandstar engine, which interacts with the controller’s I/O and makes updates to a Haystack grid. Controller points are abstracted to other processes in the form of channels. Sandstar uses the device driver framework that is already built into the kernel. For example, if the engine wishes to read an Analog Input, it would do so by reading the appropriate ADC input voltage via the kernel’s IIO subsystem. The engine will be able to identify the available channels and point types by reading the controller’s device template. The template, which is a .csv file, would be present in every type of controller and abstracts the I/O points available in hardware. Similarly, the grid is a two-dimensional matrix of tagged entities that is used to model the controller’s physical I/O points in software. This is why it’s possible to have portable Sedona apps with Sandstar. On most other Sedona devices, the I/O kits use native code created specifically for that device, which is why apps are not portable to other devices. Sandstar’s model does not dictate what physical inputs or outputs should exist on any platform. Instead, common types of controller points are provided in a single generic kit and abstracted as channels. For example, you can create an instance of an Analog Input in your Sedona app using the AI component in the Sandstar kit. If the point exists on the controller, then the channel will exist.
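To illustrate the idea of a template mapping channels onto kernel I/O, here is a minimal sketch. It is not Sandstar’s code: the template columns (`channel`, `type`, `device`, `index`), the channel numbers, and the function names are hypothetical, since the actual template layout is not described here. The `in_voltageN_raw` files under `/sys/bus/iio/devices` are the standard Linux IIO sysfs interface. The 1.8 V reference and 12-bit resolution are assumed defaults for illustration and should be checked against the datasheet of the actual ADC.

```python
import csv
import io
import tempfile
from pathlib import Path

IIO_ROOT = Path("/sys/bus/iio/devices")  # standard Linux IIO sysfs location

# Hypothetical template layout, invented for this sketch.
EXAMPLE_TEMPLATE = """channel,type,device,index
1100,AI,iio:device0,0
1101,AI,iio:device0,1
"""


def load_template(text):
    """Parse template text into {channel_number: row_dict}."""
    reader = csv.DictReader(io.StringIO(text))
    return {int(row["channel"]): row for row in reader}


def read_raw(channel, template, iio_root=IIO_ROOT):
    """Read the raw ADC count for a channel through the IIO sysfs files."""
    row = template[channel]
    path = Path(iio_root) / row["device"] / f"in_voltage{row['index']}_raw"
    return int(path.read_text().strip())


def raw_to_volts(raw, vref=1.8, bits=12):
    """Scale a raw count to volts; vref and bits are assumed, not universal."""
    return raw * vref / ((1 << bits) - 1)


if __name__ == "__main__":
    template = load_template(EXAMPLE_TEMPLATE)
    # On a desktop without ADC hardware, fake the sysfs tree so the sketch runs.
    with tempfile.TemporaryDirectory() as tmp:
        dev = Path(tmp) / "iio:device0"
        dev.mkdir()
        (dev / "in_voltage0_raw").write_text("2048\n")
        raw = read_raw(1100, template, iio_root=tmp)
        print(f"channel 1100: raw={raw}, about {raw_to_volts(raw):.3f} V")
```

Because the application only ever asks for a channel number, moving to a different board would mean shipping a different template rather than rewriting the application.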

The Haystack grid serves a second purpose beyond providing scalar control values to the Sedona application. The built-in Haystack server supplies metadata directly from the device. In many cases we see Haystack data being presented not by the actual equipment controller, but by a proxy device. There is no longer a good reason to do this. The edge controller, with its increased capability, can directly present data about the equipment it’s attached to and controlling. This offers two distinct advantages. For one, there is no need to commission two separate devices relating to the same piece of equipment. The second, much more powerful aspect is the ability to use tagging not just for information and analytics, but to actually control the equipment. Consider the use case where a collection of VAV boxes is served by a particular air handler, which in turn is served by a chiller. In many proprietary systems, some type of external data structure must be generated to describe how individual pieces of equipment should request resources such as “cooling” from one another. Tags could be used for this purpose. Tags attached to these pieces of equipment can be used to automatically describe their purposes as well as their relationships to each other. Currently, existing tags have individual definitions that are consumable by software. But as of yet, there is no formal definition of how these standardized tags are related to each other. Not yet…
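The VAV, air handler, and chiller use case can be sketched with plain tagged records. This is an illustration of the idea only, not a Haystack library or a formal model: entities are dictionaries, marker tags are set to `True`, and reference tags hold the id of another entity. `ahuRef` is an existing Haystack tag; `chillerRef`, the entity ids, and both function names are assumptions invented for this example.

```python
# Each dict is one entity. Marker tags are True; ref tags hold another entity's id.
ENTITIES = [
    {"id": "chiller-1", "equip": True, "chiller": True},
    {"id": "ahu-1", "equip": True, "ahu": True, "chillerRef": "chiller-1"},
    {"id": "vav-101", "equip": True, "vav": True, "ahuRef": "ahu-1"},
    {"id": "vav-102", "equip": True, "vav": True, "ahuRef": "ahu-1"},
]

# Tags treated as "is served by" relationships (chillerRef is hypothetical).
REF_TAGS = ("ahuRef", "chillerRef")


def index_by_id(entities):
    """Build an id -> entity lookup table."""
    return {e["id"]: e for e in entities}


def upstream(entity_id, by_id):
    """Follow ref tags to everything serving an entity, nearest first.

    Assumes the references contain no cycles.
    """
    chain = []
    current = by_id[entity_id]
    while True:
        ref = next((current[t] for t in REF_TAGS if t in current), None)
        if ref is None:
            return chain
        chain.append(ref)
        current = by_id[ref]


def propagate_cooling_requests(requesting_ids, by_id):
    """Count, per supplier, how many downstream units are asking for cooling."""
    counts = {}
    for rid in requesting_ids:
        for supplier in upstream(rid, by_id):
            counts[supplier] = counts.get(supplier, 0) + 1
    return counts


if __name__ == "__main__":
    by_id = index_by_id(ENTITIES)
    print(upstream("vav-101", by_id))
    print(propagate_cooling_requests(["vav-101", "vav-102"], by_id))
```

No separate routing table is needed here: the request path from each VAV box to the chiller is recovered entirely from the tags on the equipment.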

Take a look at working group 551 on the Project Haystack site. There is an effort underway there to formalize the relationships between existing tags so that they can be presented in machine-readable formats. Someday we won’t even need a central BMS server. All management, control, histories, and analytics will occur in the fog, greatly reducing bandwidth external to the enterprise. Each controller will contain its own part of the BMS, as well as backups of other parts. Relationships to other equipment on and off site will be encoded in the form of tags, which will be used for M2M control.
