AI and machine learning (ML) are often used interchangeably, but they’re not technically the same thing. The difference is simpler than you might think, and once you understand it, you’ll never mistake the two again. The following is a very basic explanation that omits many technical aspects of AI vs. ML that are beyond the scope of this article. The definitions and examples below are meant to lay a foundation for further exploration of these topics.

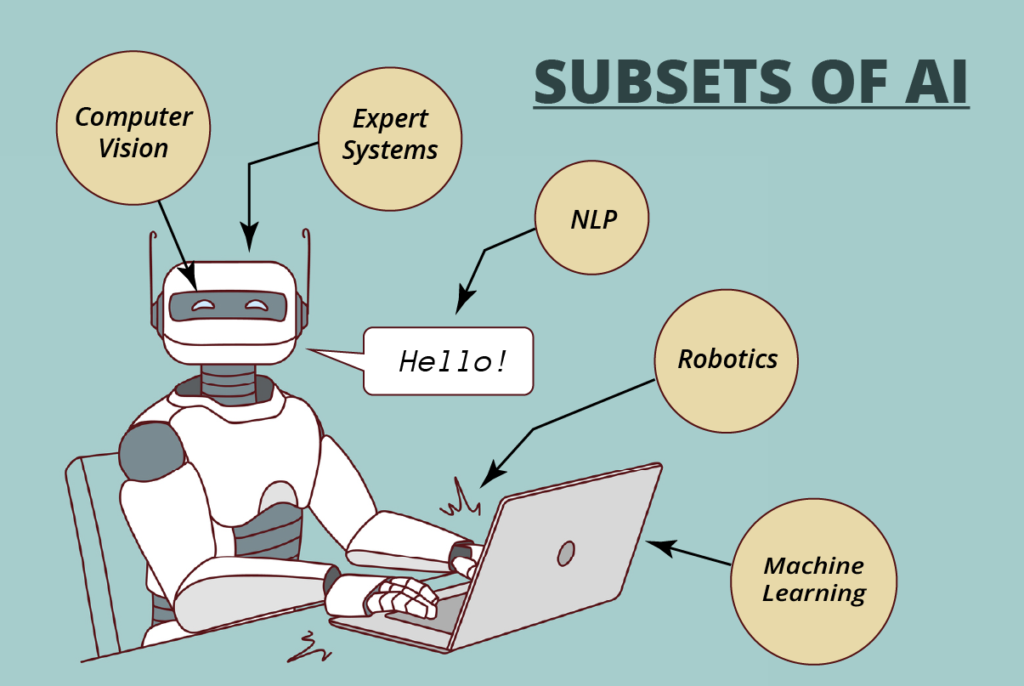

AI encompasses a wide range of technologies and techniques, including natural language processing, computer vision, robotics, and expert systems, in addition to machine learning. Machine learning, on the other hand, is a specific approach to building AI systems, which is based on the idea of enabling machines to learn from data without being explicitly programmed.

Artificial Intelligence: The Entire Robot

Artificial intelligence (AI) is a broad term that refers to creating machines that can perform tasks that normally require human intelligence. Examples of such tasks include visual perception, speech recognition, decision-making, and language translation. There are many subsets and subfields of AI, each of which tries to solve a specific problem and/or takes a different approach to creating “intelligence”. Here are five widely recognized subsets of AI:

- Natural Language Processing (NLP) focuses on enabling machines to understand, interpret, and generate human language. NLP is used in applications such as chatbots, voice assistants, and language translation. ChatGPT is an example of an NLP application.

- Computer Vision is concerned with enabling machines to interpret and understand visual data from the world around them. Computer vision is used in applications such as object detection or facial recognition. Driver-assistance systems, like those in some Tesla models, use computer vision.

- Robotics develops machines that can physically and autonomously interact with the world around them to perform tasks like assembly line work or rescue operations. Boston Dynamics focuses on robotics.

- Expert Systems are designed to mimic the reason-based decision-making ability of an expert in a particular field, such as medical diagnosis or financial analysis. The “AI lawyers” you may have heard about aim at the same goal: packaging an expert’s reasoning in software.

- Machine Learning involves feeding data into a machine learning algorithm and allowing it to learn from that data in order to make accurate predictions or classifications about new data.

So, ML is a subset of AI. That’s the first big difference to note. While AI is a term that encompasses a wide range of technologies and techniques, ML is a specific approach to building AI systems.

It’s helpful to think of AI as the “entire robot”: a fully autonomous machine capable of thinking and acting like a human. Each subset, however, is only one part of that robot. Robotics attempts to develop the “body” for interacting with the environment. Computer vision gives the robot the ability to make visual sense of its world. NLP arms it with the power to communicate. ML bestows the faculty of learning. And expert systems send it to university. It’s a true Frankenstein’s monster of disparate parts, but when brought together, those parts are meant to realize the goal of AI.

What’s Machine Learning?

You hear a lot about ML because it’s a critical step in creating the entire robot. Almost everything we consider to be alive must be able to learn. Birds do it. Bees do it. Heck, even amoebas do it. But despite its ubiquity in the world of the living, learning is incredibly complex. ML is therefore taking on one of the biggest challenges in AI, but it is also the one that promises the biggest return. Once we create a machine that learns, we can train it to make better decisions. So how do you create a machine that learns?

ML uses statistical algorithms to enable machines to learn from data and improve their performance on specific tasks over time. ML algorithms analyze large amounts of data to identify patterns, which they then use to make predictions or decisions about new data. Like humans, machines have to be “taught” by exposing them to information.

ML Example: House Price Estimator

Suppose you wanted to create an ML algorithm that predicts the price of a house based on its size and location. You would need two sets of data: a training set and a test set. First, you would create a training set composed of recently sold houses, each with its sale price, size, and location.
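As a rough sketch of what those two data sets might look like, the Python below splits a handful of house records into a training set and a test set. Every record, the field layout, the helper name `split_train_test`, and the 75/25 split are assumptions invented for illustration; none of it is real sales data.

```python
import random

# Hypothetical records: (size in sq ft, miles from airport, sale price in USD).
# These numbers are invented purely for illustration.
houses = [
    (1200, 2.0, 90_000),
    (1400, 4.0, 95_000),
    (1500, 6.0, 150_000),
    (1800, 8.0, 180_000),
    (2200, 12.0, 240_000),
    (2400, 9.0, 260_000),
    (2600, 10.0, 280_000),
    (3000, 15.0, 320_000),
]

def split_train_test(records, test_fraction=0.25, seed=42):
    """Shuffle a copy of the records and hold back a fraction for testing."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

training_set, test_set = split_train_test(houses)
print(len(training_set), "training houses,", len(test_set), "test houses")
```

The algorithm only ever learns from `training_set`; the houses in `test_set` are held back so there is something it has never seen to check its guesses against.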

The ML algorithm then processes the training data to look for patterns. After some processing, let’s say it “learned” the following “rules”:

- Houses larger than 2,000 sq ft sell for > $200K

- Houses smaller than 2,000 sq ft sell for < $200K

- Houses within 5 miles of the airport sell for < $100K

- Houses within 5 miles of the lake sell for > $300K

The algorithm could then use this knowledge to predict the price of a house outside the training dataset (i.e., a house from the test set). For example, a house that is:

- 2,500 sq ft and 3 miles from the airport.

Since the new house is larger than 2,000 sq ft, the algorithm would apply the “> $200K” rule, but since it’s also within 5 miles of the airport, it would apply the “< $100K” rule as well. The two rules conflict, so the algorithm has to compromise. Splitting the difference between them, its prediction would likely be about $150K.
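To make that arithmetic concrete, here is a small Python sketch that applies the two rules that fire for this house and averages their boundary prices. The averaging step is only one assumed way of reconciling conflicting rules, and the names `RULES` and `predict_price` are hypothetical.

```python
# Each rule: (description, test on the house, boundary price in USD).
RULES = [
    ("larger than 2,000 sq ft -> more than $200K",
     lambda h: h["size_sqft"] > 2000, 200_000),
    ("within 5 miles of the airport -> less than $100K",
     lambda h: h["miles_from_airport"] <= 5, 100_000),
]

def predict_price(house):
    """Average the boundary prices of every rule that applies to the house."""
    boundaries = [price for _, applies, price in RULES if applies(house)]
    if not boundaries:
        return None  # no rule fired, so this sketch cannot make a guess
    return sum(boundaries) / len(boundaries)

new_house = {"size_sqft": 2500, "miles_from_airport": 3}
print(predict_price(new_house))  # 150000.0
```

A real ML model would not store rules this literally, but the sketch shows why two conflicting signals land on a guess somewhere in between.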

Next, the ML algorithm checks its guess against the actual price, which is $170K. It now has a $20,000 discrepancy it needs to resolve. It checks for more patterns and learns that, as houses of equal size get closer to the airport, they decrease in price. Through some calculations, the program can determine how price changes with proximity and apply that relationship as a weighted value in its next prediction. For example, maybe each mile closer to the airport equates to a 10% decrease in price.
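The two calculations in this step can be sketched in a few lines of Python. The function names are hypothetical, and the sketch assumes the 10% decrease compounds with each mile, which the example above leaves open; a flat 10% of the original price per mile would be an equally valid reading.

```python
def prediction_error(predicted, actual):
    """Signed gap between the actual sale price and the guess."""
    return actual - predicted

def airport_discount(base_price, miles_closer, rate_per_mile=0.10):
    """Reduce the price by a fixed percentage for each mile closer to the airport.

    Assumes the percentage compounds mile by mile.
    """
    return base_price * (1 - rate_per_mile) ** miles_closer

print(prediction_error(predicted=150_000, actual=170_000))  # 20000
print(round(airport_discount(200_000, miles_closer=2)))     # 162000
```

The `rate_per_mile` value is the “weight” here: it is the number the algorithm would keep adjusting as it sees more houses.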

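The machine uses this constant process of guessing and checking, known as training, to improve its predictions. (In neural networks, the step that feeds the error back through the model to adjust it is called backpropagation.) The more iterations and inputs, the “smarter” the algorithm generally gets.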
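One common way to automate this guess-and-check loop is gradient descent, sketched below in plain Python. This is an illustrative stand-in, not necessarily what any particular product uses: the data is made up, the model is deliberately tiny (price = weight × size), and the step count and learning rate are assumed values.

```python
# Made-up training data: size in thousands of sq ft, price in thousands of USD.
sizes = [1.0, 1.5, 2.0, 2.5]
prices = [100.0, 150.0, 200.0, 250.0]

def mean_squared_error(weight):
    """Average squared gap between guesses (weight * size) and actual prices."""
    return sum((weight * x - y) ** 2 for x, y in zip(sizes, prices)) / len(sizes)

def train(steps=50, learning_rate=0.1):
    """Repeatedly guess, measure the error, and nudge the weight to reduce it."""
    weight = 0.0   # initial guess: size has no effect on price
    history = []   # error recorded before each update
    for _ in range(steps):
        history.append(mean_squared_error(weight))
        # Gradient of the mean squared error with respect to the weight.
        gradient = sum(
            2 * (weight * x - y) * x for x, y in zip(sizes, prices)
        ) / len(sizes)
        weight -= learning_rate * gradient
    return weight, history

weight, history = train()
print(f"learned price per 1,000 sq ft: ${weight:.1f}K")
print(f"error before: {history[0]:.1f}, after: {history[-1]:.6f}")
```

Each pass through the loop is one “guess and check”: the error shrinks with every iteration until the weight settles near the value that best fits the data.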

“So what?” you might ask. “Isn’t this simple logic? Why do we need a machine to do this?” Well, for one, ML can sift through data, find patterns, and test its guesses against real-world data at an astonishing rate. In short, it can “learn” much quicker than humans. For another, it can juggle many more parameters than we ever could, so its guesses tend to become more accurate over time.

Think about all the factors that go into the price of a house besides size and location. There’s the house’s age, condition, number of rooms, the market conditions, and seller motivation just to name a few. But there are other less typical considerations like current interest rates, lot locations, or roof type. When you drill down further, you find that the actual number of factors is enormous. For example, what about the history of a house or the future of the neighborhood where it resides? The better our predictive capabilities, the more important these “lesser” considerations become.

ML can iterate much faster and with greater detail than we can, making it more efficient at locating such “hidden” patterns. What if dark-colored houses sold for higher prices than light-colored ones? Maybe houses with more east-facing windows were cheaper than more west-facing ones. Machine learning can consider all these factors and then some—and do it in real time.
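As a toy illustration of hunting for such a “hidden” pattern, the Python sketch below compares average sale prices for dark and light houses. The listings are invented, and `average_price_by` is a hypothetical helper; a real system would need far more data and would have to account for other factors before trusting a gap like this.

```python
from collections import defaultdict
from statistics import mean

# Invented listings: (exterior shade, sale price in USD).
listings = [
    ("dark", 260_000), ("light", 240_000),
    ("dark", 310_000), ("light", 295_000),
    ("dark", 205_000), ("light", 200_000),
]

def average_price_by(records):
    """Group prices by their label and return the mean price of each group."""
    groups = defaultdict(list)
    for label, price in records:
        groups[label].append(price)
    return {label: mean(values) for label, values in groups.items()}

averages = average_price_by(listings)
print(averages)
```

An ML algorithm effectively runs comparisons like this across every factor it is given, and across combinations of factors, which is where the speed advantage over a human analyst shows up.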

Finally, imagine adding to this learning algorithm the ability to search for, monitor, and collect house price information for a large region of the country. It would be a fully autonomous learning and predicting machine that would only get smarter the longer it worked. That’s where ML is today.

Conclusion

It’s easy to see how ML algorithms are a game changer for humanity. Their application to knowledge-based work of every kind is almost limitless. What AI developers are attempting is the automation of thinking itself. Translate these advantages to building automation, and you can see how ML could transform the built environment. Imagine AI that could plan your building’s HVAC setpoints a week in advance based on the weekly weather forecast and predicted energy prices. What about a fault detection and diagnostics (FDD) system that could predict chiller failure with 98% accuracy?